FAQ: What is the architecture of MageStack

So I was listening to a podcast recently that touched on some facets of MageStack, likening it to VPS hosting. Is it just a VPS?

In a word, no. Not even in the slightest. There's a high-level overview of MageStack in our introduction to MageStack article - but looking back, it probably doesn't make clear enough what MageStack is from an architectural perspective.

Starting with an overview

MageStack is a simple product to use, built on a very complex platform. Now brace yourself, this is a fairly deep dive, so I'll do my best to explain.

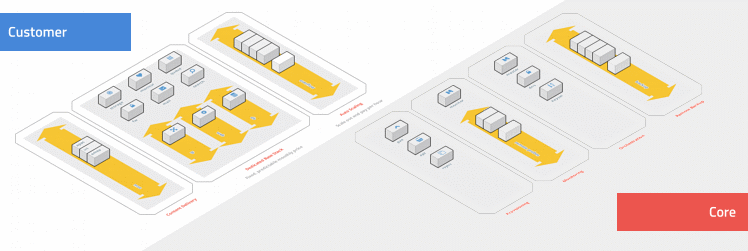

| Customer | Core |
|---|---|
| On the customer side, what makes it simple is root access to a single Debian Linux installation where you administer your cron jobs, upload your files, view all your logs, restart services and install any software you wish. Behind this is a complex relationship of containers, real-time file replication and load balancing across both the permanent physical hardware in your stack and overflow capacity that you've scaled into. I'll touch on this more later. | At the core, there's a network of systems powering and orchestrating all customer containers; centrally aggregating logs for WAF analysis, centrally and remotely monitoring services and site status, provisioning and auditing the infrastructure across our global data-centres, storing configuration and performing remote backups. This highly redundant and distributed system is what allows us to provision new stacks, update all customer stacks at the click of a button and view the status of tens of thousands of running containers. |

Where it gets complex is in understanding the relationship between what the containers do and how that is bound (or, in reality, not bound) by the physical infrastructure available.

Types of hosting for scalable PHP applications

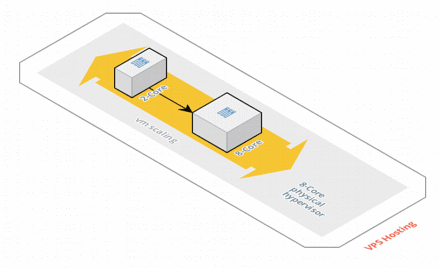

Traditional VPS Hosting

Let me start by saying that we don't do anything like this at Sonassi - but I fear the person asking the original question may have interpreted it this way.

With traditional VPS hosting you have a physical hypervisor of a given specification (I've put 8 cores above); within that you can use VPS (virtual private servers - also known as containers) or VDS (virtual dedicated servers). With either a VPS or a VDS, you initially constrain the resources of the guest to be smaller than the total resource available.

Then, when you have a scaling event, you dynamically resize the guest to allow it to use more resources when it needs it and shrink it back down when you don't need it.

There's a simple elegance, as you potentially only pay for what you need and scaling can be quite quick. However, there are a number of downsides:

- It is a shared physical machine, where other virtual servers could disrupt performance on the host and in turn affect your guest

- Dynamically re-configuring the software on the guest to suit greater CPU/RAM limits is a challenge

- Horizontal scaling is limited to that of the technology available (e.g. vMotion)

I've only described a very primitive approach, but there are other variations on this like having separate roles per virtual server (eg. web, db, mail etc.), or even dedicating resources (rather than sharing the underlying physical server) - but the more abstraction, the more the complexity and the more dedicated resource, the greater the cost.

For anything but the smallest possible deployments, this is entirely unsuitable for a scalable PHP application.

MageStack

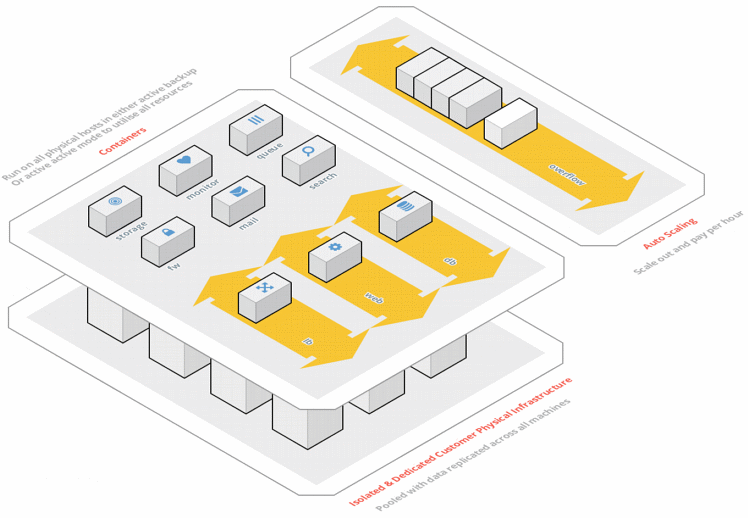

Bear with me here, I've tried to portray in diagram format what we do on a per customer basis, but I appreciate it probably isn't clear, so let me explain.

Each customer has their own stack where the resources are completely dedicated to them (in my example above, there are 12 physical machines in the stack). That means a private and dedicated network segment, dedicated firewall policies and dedicated underlying servers. A stack is made up of one or many dedicated servers (of any specification). Take our build tool, for example: you can build a stack with a single physical machine in it - or you can add a second physical machine (or a third, or nth) to provide a robust underlying framework.

This dedicated resource is all yours, not shared with any other customer. Your data is replicated in real-time amongst all physical machines - and the containers themselves are replicated across all the machines.

The containers themselves are isolated micro-services, given shorthand names for the roles they undertake. We had a choice whether we completely isolated each container to its purpose (à la Docker) or used a hybrid model that combined complementary services within a single container (à la LXC/Linux VServer). Now, Docker is great, it's a very useful tool, but in production at massive scale it can become an interesting beast, carrying some security risks, difficulty in fault diagnosis and performance issues.

It's a much bigger discussion than this post allows, but for these (and many other) reasons, we do not use Docker on MageStack, but we do use role-based containers. It's not that Docker is bad, it's just not appropriate for the high performance, reliability and security SLAs we set.

| Name | Role |
|---|---|
| lb | Load balancer (WAF, Caching, SSL termination) |
| web | Web server (Static/Dynamic content delivery) |
| db | Database server (MySQL, Memory Cache Stores) |
| mail | Mail server (Outbound transactional emails) |
| search | Search server (Sphinx/Elastic/SOLR) |
| monitor | Monitoring server (ELK, Graphing, Service monitoring, Logging) |
| queue | Queue server (RabbitMQ) |
| acc | Access server (Cron jobs, SSH/FTP/SFTP etc.) |

Here are some examples of what that might look like:

| Number of physical servers | Containers Running |
|---|---|
| 1 | 1x Load balancer container; 1x Web container; 1x Database container; 1x Mail container; 1x Search container; 1x Monitor container; 1x Queue container; 1x Access container |
| 2 | 2x Load balancer containers (Active/Backup or Active/Active); 2x Web containers (Active/Active); 2x Database containers (Active/Backup, Master/Slave or Master/Master); 2x Mail containers (Active/Backup); 2x Search containers (Active/Backup); 2x Monitor containers (Active/Backup); 2x Queue containers (Active/Backup); 2x Access containers (Active/Backup) |
| 10 | 10x Load balancer containers (Active/Backup or Active/Active); 10x Web containers (Active/Active); 10x Database containers (Active/Backup, Master/Slave or Master/Master); 10x Mail containers (Active/Backup); 10x Search containers (Active/Backup); 10x Monitor containers (Active/Backup); 10x Queue containers (Active/Backup); 10x Access containers (Active/Backup) |
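
The pattern in the table (one container per role for every physical server in the stack) can be sketched in a few lines of Python. This is purely illustrative and not MageStack code: the role names and redundancy modes come from the tables above, while `ROLES`, `containers_for` and `describe` are hypothetical names made up for the example.

```python
from __future__ import annotations

# Role shorthand names mapped to the redundancy modes listed in the table.
ROLES = {
    "lb": "Active/Backup or Active/Active",
    "web": "Active/Active",
    "db": "Active/Backup, Master/Slave or Master/Master",
    "mail": "Active/Backup",
    "search": "Active/Backup",
    "monitor": "Active/Backup",
    "queue": "Active/Backup",
    "acc": "Active/Backup",
}


def containers_for(servers: int) -> dict[str, int]:
    """Return the container count per role for a stack of `servers` machines."""
    if servers < 1:
        raise ValueError("a stack needs at least one physical server")
    # One container of every role per physical server, as in the table.
    return {role: servers for role in ROLES}


def describe(servers: int) -> list[str]:
    """Render one line per role, adding the redundancy mode once there is a peer."""
    lines = []
    for role, count in containers_for(servers).items():
        mode = f" ({ROLES[role]})" if servers > 1 else ""
        lines.append(f"{count}x {role}{mode}")
    return lines
```

Calling `describe(2)` gives one line per role with its redundancy mode, mirroring the two-server row of the table.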

Where are the containers running?

Consider all the physical hardware available as being one big cluster; a huge pool of available resource where MageStack's monitoring system will continuously evaluate which physical machines are best suited to which roles. It is looking at available CPU, RAM and network activity to decide where each container is going to thrive.
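
As a rough illustration of that kind of decision, here is a minimal Python sketch that picks a machine based on free CPU, RAM and network capacity. The scoring rule (rank each machine by its scarcest resource) is an assumption chosen for the example, not a description of how MageStack actually weighs these metrics; `Host`, `headroom` and `best_host` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Host:
    name: str
    cpu_free: float  # fraction of CPU currently idle, 0.0 to 1.0
    ram_free: float  # fraction of RAM currently unused, 0.0 to 1.0
    net_free: float  # fraction of network capacity unused, 0.0 to 1.0


def headroom(host: Host) -> float:
    """Assumed scoring rule: a host is only as roomy as its scarcest resource."""
    return min(host.cpu_free, host.ram_free, host.net_free)


def best_host(hosts: list[Host]) -> Host:
    """Pick the host with the most headroom for the next container."""
    if not hosts:
        raise ValueError("no physical machines in the stack")
    return max(hosts, key=headroom)


# Example stack: server1 has plenty of RAM but little spare CPU.
hosts = [
    Host("server1", cpu_free=0.2, ram_free=0.7, net_free=0.9),
    Host("server2", cpu_free=0.6, ram_free=0.5, net_free=0.8),
]
chosen = best_host(hosts)
```

Here `chosen` is `server2`, because its tightest resource (RAM at 0.5 free) still leaves more room than server1's tightest (CPU at 0.2 free).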

For example, in a two-server stack, the most common way MageStack arranges the containers is shown below. It takes full advantage of all resources, from all stack members, all the time - nothing sits idle or overly stressed.

| Physical Server 1 | Physical Server 2 |
|---|---|
| lb1 | lb1 (backup) |
| web1 | web2 |
| db1 (backup) | db1 |
| search1 | search1 (backup) |
| queue1 | queue1 (backup) |
| monitor1 | monitor1 (backup) |
| mail1 | mail1 (backup) |
| acc1 | acc1 (backup) |

And if one of those servers fails, the remaining server(s) bring up the backup containers and provide continuity of service.

Provided you have at least two physical machines in your stack, you'll benefit from high availability.
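
To make the failover behaviour concrete, here is a small Python sketch built on the two-server layout above. It only models the Active/Backup containers (the web containers are Active/Active, so both are always serving). The host names `server1` and `server2` and the `fail_over` function are hypothetical, and the promotion logic is an assumption for illustration rather than MageStack's actual mechanism.

```python
from __future__ import annotations

# Active/Backup containers from the two-server table:
# container -> (host running the active copy, host holding the backup).
LAYOUT = {
    "lb1": ("server1", "server2"),
    "db1": ("server2", "server1"),
    "search1": ("server1", "server2"),
    "queue1": ("server1", "server2"),
    "monitor1": ("server1", "server2"),
    "mail1": ("server1", "server2"),
    "acc1": ("server1", "server2"),
}


def fail_over(layout: dict[str, tuple[str, str]], failed: str) -> dict[str, str]:
    """Return container -> serving host after the `failed` host goes down."""
    serving = {}
    for container, (active, backup) in layout.items():
        if active != failed:
            # The active copy is unaffected and keeps serving.
            serving[container] = active
        elif backup != failed:
            # The active copy was lost, so the backup is brought up.
            serving[container] = backup
        else:
            raise RuntimeError(f"{container} has no surviving copy")
    return serving


after_server1_fails = fail_over(LAYOUT, "server1")
after_server2_fails = fail_over(LAYOUT, "server2")
```

Whichever server is lost, every container ends up served by the survivor, which is why two physical machines are enough for high availability.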

The access server

This is an interesting container - as it's the most fundamental one and your key point of access (as the name would suggest!) as a customer. The access server mounts your stack's dedicated network file system - which is what allows you to write to all nodes simultaneously, in real-time, read backups, logs etc.

It is a full-blown Debian Linux environment, where you've got unrestricted root access and the ability to do what you want. This massive level of flexibility, whilst abstracting away the sheer complexity of the rest of the stack, is what makes MageStack so elegant and powerful. All your deployment tools and methods will happily work, you can execute any scripts in any language you wish (Bash, PHP, Python ... whatever you like!) - the environment is there to service you, just like a single, dedicated server would do.

So picture that: your interaction is as simple as working on a single, dedicated server; but behind the scenes, in real-time, there is replication, high availability and load balancing - and neither you nor the application you are hosting need be aware.

Overflow servers

So you'll have noticed these were snuck in on the right-hand side of the diagram and subtly tagged with the line "auto-scaling". Overflow servers are the secret behind being able to scale out horizontally and scale out massively. I touched on vertical scaling very briefly when outlining traditional VPS hosting, where you can scale to the limit of the physical server your virtual machine is on. Clearly this is limiting and doesn't really allow the massive scaling you may want.

Overflow servers are the solution. There's a huge pool of overflow servers in our network sat idle at any given time that, at a moment's notice (be it automatically, on a schedule or manually), can be attached to your stack and instructed to take on a certain role. Here's an example:

- You have a spike in web traffic

- The monitor container detects that the load (or expected load) for the web container is going to exceed the amount of resource available

- It adds an overflow server (or many) and instructs them to spin up web container clones for your stack

- The new web containers mount your network filesystem, load your configuration and start running

- The load balancer detects the new web servers and immediately redistributes traffic
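
The steps above can be sketched as a simple capacity calculation in Python. Everything here is an assumption for illustration: the idea that each web container handles a fixed amount of load, the load units, and the names `overflow_needed`, `scale_out` and `web-overflowN` are all hypothetical and say nothing about how MageStack's monitor actually measures load.

```python
from __future__ import annotations

import math


def overflow_needed(expected_load: float, current_web: int,
                    capacity_per_web: float) -> int:
    """How many extra web containers are needed to carry `expected_load`."""
    required = math.ceil(expected_load / capacity_per_web)
    return max(0, required - current_web)


def scale_out(lb_pool: list[str], expected_load: float,
              capacity_per_web: float) -> list[str]:
    """Return the load balancer pool after adding overflow web containers."""
    extra = overflow_needed(expected_load, len(lb_pool), capacity_per_web)
    # Each overflow server spins up a clone of the web container, which
    # then joins the pool so traffic can be redistributed across it.
    clones = [f"web-overflow{i}" for i in range(1, extra + 1)]
    return lb_pool + clones


# Two permanent web containers, each assumed to handle 200 units of load,
# facing an expected spike of 950 units.
pool = scale_out(["web1", "web2"], expected_load=950, capacity_per_web=200)
```

In this example the spike needs five web containers in total, so three overflow clones join the two permanent ones. When the expected load fits within existing capacity, `overflow_needed` returns zero and the pool is left unchanged.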

In terms of a timeline - all of the above can happen within a 2.5 minute window; and the overflow can run for as little as 1 hour - and you'll only be charged for 1 hour.

An overflow server could start a web container, or a load balancer container or any other container the stack believes needs to be scaled out. It makes resource available for a given role that your stack may have saturated, allowing you to massively scale out, despite potentially starting with a single physical server in your stack.

Overflow servers offer massive capacity, massive horizontal scaling and total cost control. MageStack uses overflow servers to reinvent dedicated hosting into a granular-pricing, instant, on-demand and horizontally scaled resource. The elasticity of the cloud, but with the low cost, performance and reliability of dedicated.

Large scale visibility, small scale risk

There are two possible ways to architect something like MageStack:

- A huge pool of resource for all customers, all part of the same platform (all the eggs in one basket)

- A series of small pools, running an identical OS and centrally orchestrated - but completely isolated (eggs are in totally separate baskets)

Ask any eager and ambitious system administrator and they'll pick option 1 - it's the holy grail of scalable computing design, one huge resource pool. But it's also a massive single point of failure; pooling everything together carries a huge risk that one facet of this huge and complex stack could take everything offline.

Needless to say, we picked option 2. All our customers are separate; physically isolated from one another with nothing shared. So if one customer's stack fails, spikes in load or misbehaves - the scope of that is limited to that single customer. But, because all customers run the same technology stack, we have phenomenal visibility to find bugs in MageStack, to deploy new features or push security updates.

We maintain a single operating system, MageStack - whilst keeping all customers totally isolated.

Fully micro-service based architecture ... on dedicated hardware?

So if you've followed me so far, you've grasped that there are several separate containers all taking on different roles; that get automatically placed on any given physical machine in the stack's resource pool - and when additional resources are needed that exceed that of the stack, new containers spin up on overflow servers, attach themselves to the main stack and take on the additional load.

You get the unrivalled raw power of dedicated infrastructure, the full accountability and control of a provider that owns the hardware, owns the network and owns the data centres themselves - and you get the massive scalability that MageStack can offer by leveraging a huge pool of overflow servers.

No resources are shared with any other customers and your day-to-day infrastructure is sized according to your needs. There's no unpredictable pricing model, just the traditional price plan for dedicated hosting that you know and love - combined with the capacity that overflows offer you.